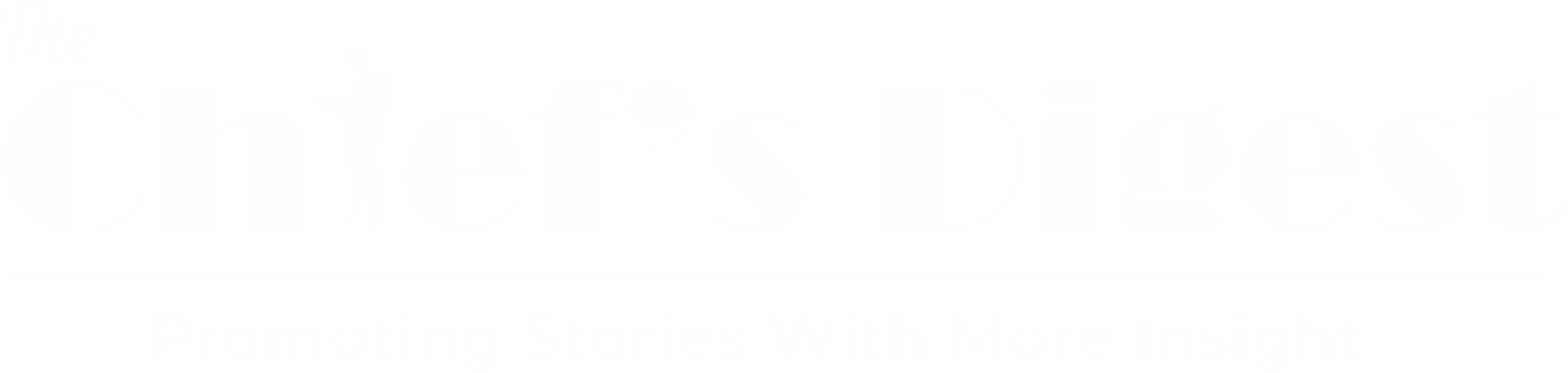

Google has shown off an artificial intelligence system that can create images based on text input. The idea is that users can enter any descriptive text and the AI will turn that into an image. The company says the Imagen diffusion model, created by the Brain Team at Google Research, offers “an unprecedented degree of photorealism and a deep level of language understanding.”

Google’s Imagen Diffusion Model

This isn’t the first time we’ve seen AI models like this. OpenAI’s DALL-E (and its successor) generated headlines as well as images because of how adeptly it can turn text into visuals. Google’s Imagen diffusion model, however, tries to create more realistic images.

To assess Imagen against other text-to-image models (including DALL-E 2, VQ-GAN+CLIP and Latent Diffusion Models), the researchers created a benchmark called DrawBench. That’s a list of 200 text prompts that were entered into each model. Human raters were asked to assess each image. They “prefer Imagen over other models in side-by-side comparisons, both in terms of sample quality and image-text alignment,” Google said.

Like DALL-E, Imagen diffusion model is not available to the public. Google doesn’t think it’s suitable as yet for use by the general population for a number of reasons. For one thing, text-to-image models are typically trained on large datasets that are scraped from the web and are not curated, which introduces a number of problems.

AI Encodes Social Biases

“While this approach has enabled rapid algorithmic advances in recent years, datasets of this nature often reflect social stereotypes, oppressive viewpoints, and derogatory, or otherwise harmful, associations to marginalized identity groups,” the researchers wrote. “While a subset of our training data was filtered to removed noise and undesirable content, such as pornographic imagery and toxic language, we also utilized LAION-400M dataset, which is known to contain a wide range of inappropriate content including pornographic imagery, racist slurs and harmful social stereotypes.”

As a result, they said, Imagen diffusion model has inherited the “social biases and limitations of large language models” and may depict “harmful stereotypes and representation.” The team said preliminary findings indicated that the AI encodes social biases, including a tendency to create images of people with lighter skin tones and to place them into certain stereotypical gender roles. Additionally, the researchers note that there is the potential for misuse if Imagen were made available to the public as is.